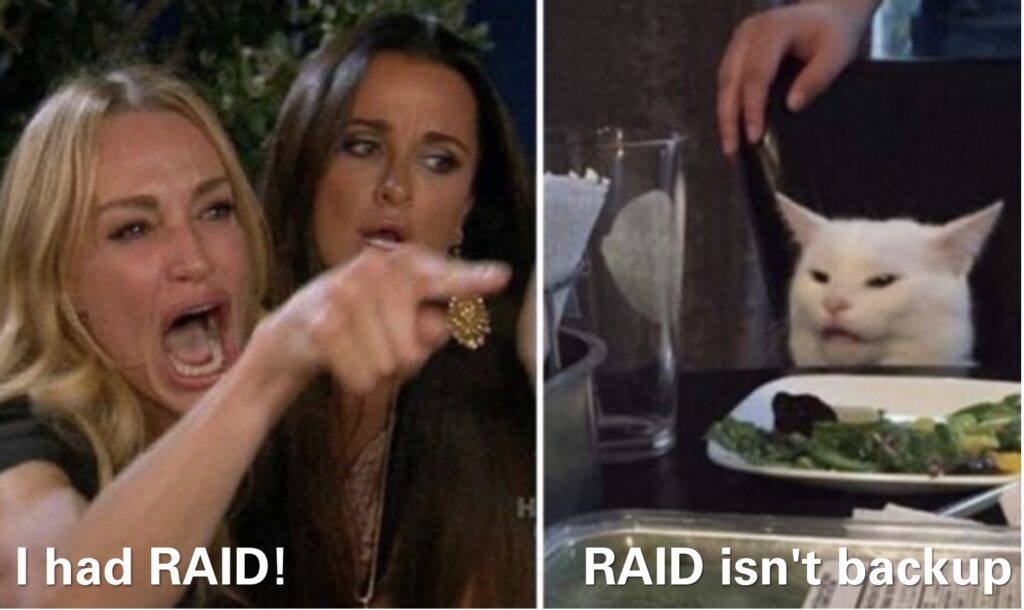

A saying so old that it has its own website and is heavily mentioned in forums filled with similarly interested individuals. And it has even been meme’d.

So last month, as I was processing more pictures from the latest play date, I noticed several pictures in the main archive weren’t loading. Strange, but I didn’t pay any mind. See, my current Lightroom setup is a repurposed Thinkpad T470 from several years ago that just happens to have a ton of RAM. But the little i5 inside? It is a poor little 6200U. Skylake, but low voltage and from 2015. And the thermals on the Lenovo aren’t made for Lightroom—the poor little thing overheats if I run it with the lid closed. This all happened because my desktop started glitching badly and refusing to boot. It is either the memory or the video card and I don’t have the spare hardware to isolate it. And my 2014 vintage Mac Mini is similarly limited processing power wise, and even more so RAM wise.

The crazy part is of all these old computers, it was my desktop with a separate GPU that couldn’t power the Dell U3818DW at its native resolution, necessitating a GPU upgrade a few years ago. But to be honest, you can tell the laptop is hurting (mostly through graphics artifacts/glitching). The Mac Mini, however, somehow pumps out the video for both the 38″ Dell and my 24″ Asus, enabling a gigantic dual monitor setup that I hope to continue once I upgrade.

But back to the RAID issue.

As seen a few years ago, I migrated to a BTRFS setup. One big motivation was to avoid bitrot. XFS certainly didn’t have that capability, and over the years I have noticed a few glitches in my data. So even though I didn’t invest in ECC, my thinking was at least the filesystem should know about such things, and if possible, fix them.

So as the poor little Lenovo tries to render 1:1 previews for the latest picture load, I notice a few of the older pictures weren’t loading. I move most of the pictures over to the NAS to minimize the pictures stored on the laptop alone, so sometimes it is just the WiFi. But not this time.

Indeed, later on, I look in the NAS logs and I see CRC errors. Lots of them. Some digging around in the btrfs device stats shows one of the drives in my RAID5 array giving me a ton of corruption errors. So I immediately run a scrub on the array to try and fix the data. Since this btrfs volume is set up with RAID5 data and RAID1C3 metadata, I wasn’t too concerned about the array crashing. But I wanted to get to the bottom of this.
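If you want to keep an eye on those counters without squinting at the raw output, here is a minimal sketch of how the `btrfs device stats` output could be parsed to flag the misbehaving drive. The device names and error counts in `SAMPLE` are made up for illustration and are not from my NAS; the helper names are my own, not part of any btrfs tooling.

```python
import re
from collections import defaultdict

# Matches lines such as "[/dev/sdb].corruption_errs  412"
STAT_LINE = re.compile(r"^\[(?P<dev>[^\]]+)\]\.(?P<counter>\w+)\s+(?P<value>\d+)$")


def parse_device_stats(text):
    """Return {device: {counter: value}} from `btrfs device stats` output."""
    stats = defaultdict(dict)
    for line in text.splitlines():
        match = STAT_LINE.match(line.strip())
        if match:
            stats[match["dev"]][match["counter"]] = int(match["value"])
    return dict(stats)


def devices_with_errors(stats):
    """Keep only the devices that have at least one nonzero counter."""
    flagged = {}
    for dev, counters in stats.items():
        nonzero = {name: count for name, count in counters.items() if count > 0}
        if nonzero:
            flagged[dev] = nonzero
    return flagged


# Hypothetical sample output; the numbers are invented for this example.
SAMPLE = """\
[/dev/sda].write_io_errs    0
[/dev/sda].read_io_errs     0
[/dev/sda].flush_io_errs    0
[/dev/sda].corruption_errs  0
[/dev/sda].generation_errs  0
[/dev/sdb].write_io_errs    0
[/dev/sdb].read_io_errs     0
[/dev/sdb].flush_io_errs    0
[/dev/sdb].corruption_errs  412
[/dev/sdb].generation_errs  0
"""

if __name__ == "__main__":
    print(devices_with_errors(parse_device_stats(SAMPLE)))
```

On a real system, the text would come from running `btrfs device stats` against the mount point (as root) instead of the canned sample. A single drive showing up in the result, like in my case, points at that drive or its cabling rather than at the whole array.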

Eventually, the scrub hits the bad file and fixes it up. But as the scrub keeps running, it finds more and more errors. Which is a bit unsettling and makes me wonder how stable the array really is. A few more scrubs later and I notice the errors are transient as well. Sometimes the drive spits out tons of errors and sometimes it is humming along just fine. So because it is configured with RAID5 data, I ran a scrub on each of the individual devices. In general, RAID5 on btrfs comes with significant warnings because of a variety of issues documented on the development page. Scrubbing the individual devices in the RAID5 is one of the guidelines from the developers. And who am I to question them? But if you too are venturing down the btrfs RAID5 path, read through that email from the developers because it sets the ground rules and foundational expectations.
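For the curious, scrubbing one device at a time could look roughly like the sketch below. It assumes the array members are `/dev/sda` through `/dev/sdc`, which are placeholder names and not my actual layout, and it defaults to a dry run that only prints the commands. The `-B` flag keeps each scrub in the foreground, so the next one starts only after the previous one finishes.

```python
import subprocess


def scrub_commands(devices):
    """Build one foreground `btrfs scrub start` command per member device."""
    return [["btrfs", "scrub", "start", "-B", dev] for dev in devices]


def scrub_each(devices, dry_run=True):
    """Scrub the devices one after another; return (device, exit code) pairs."""
    results = []
    for dev, cmd in zip(devices, scrub_commands(devices)):
        if dry_run:
            # Only show what would be run; nothing touches the disks.
            print(" ".join(cmd))
            results.append((dev, None))
        else:
            # Needs root and a mounted btrfs filesystem containing `dev`.
            completed = subprocess.run(cmd)
            results.append((dev, completed.returncode))
    return results


if __name__ == "__main__":
    # Placeholder device names; substitute the members of your own array.
    scrub_each(["/dev/sda", "/dev/sdb", "/dev/sdc"])
```

Running them back to back like this takes a while on big drives, but it keeps the scrubs from competing with each other for I/O.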

Hrmm, more errors in the array, but all concentrated on one drive. Well, that’s good I guess. But then, weirdly, another one of the arrays in the NAS starts glitching. That’s odd too. By now, I’m having flashbacks to my desktop system glitching out with weird rebooting loops. So I dig the server out from its home at the bottom of the shelf and give it a good vacuum before I go in and take a look to see what’s going on. Since the errors are concentrated on one drive, I pull the problematic drive out and immediately see the problem—a loose piece of tape on the SATA connector.

Huh? Tape? What?

You see, the 10TB drives I rebuilt the array with were shucked. What’s that? Well, when you’re a hard drive manufacturer, you want to charge extra for those dorks that are trying to build a home NAS. You slap some extra warranty on the drive, and then you juice the price. But you still have to sell to the unwashed masses. So you take some drives and you throw them in a plastic case and sell those. But the unwashed masses have highly elastic demand, so you gotta cut your price to meet this month’s sales targets. So the dorks buy the cheap external drives, shuck them out of their plastic cases, and use the bare drives in their NAS. But WD, particularly for their white label drives, decided to implement something slightly different.

In the new SATA specification, the ability to disable power to the hard drive is included. More particularly, this changes the behavior of the third pin on the SATA power connector, which was previously tied to P1 and P2 as a 3.3V supply, according to Tom’s Hardware. A power supply that still feeds 3.3V to that pin is telling the drive to stay powered off, so the drive never spins up. And WD’s own documentation confirms this new aspect of the SATA standard. But fortunately, the Internet has found the fix: a piece of tape over that pin.

When I pulled off the SATA connector, the tape was wrinkled and not really blocking the third pin properly. After a few minutes, I reapplied the tape and slapped the drive back into the system. And while I was in there, I checked all the cables on all the drives. The SFF-8087 to SATA cables I got when I built the server were barely long enough, so the tension is a little tight.

After closing up and booting back up, all the drives came online, which was a good sign. And afterwards, I ran a series of scrubs on all arrays. No more errors! And Lightroom is happily pulling up pictures from the NAS. Just slowly, very slowly.

It’s been about a month now since that crop of errors and things have worked smoothly since. Knock on wood. Glad these old drives are still working well and have plenty of space for more play date pictures!